The 7 traits of a modern metrics stack

In the previous article, my co-founder Mona highlighted why it’s time metrics became first-class citizens in the modern data stack. To briefly recap:

- Metric definitions locked inside BI tools result in metric divergence across teams.

- This makes data sharing across reporting, analytics and data science work-streams difficult. Data definitions end up redefined.

- Strong governance and lineage around metric definitions are desirable to ensure the definitions remain stable. But we also want the process of metric definition, revision and deployment to be easy, predictable, and repeatable.

We propose the “Modern Metrics Stack”, to give metrics the place they deserve in the larger modern data stack. In this post, I’ll dive deeper into the desired attributes of this modern metrics stack. These learnings are distilled from our experience working with customers at Falkon and with data in our prior roles as analysts, engineers, data scientists and business leaders.

The Modern Metrics Stack

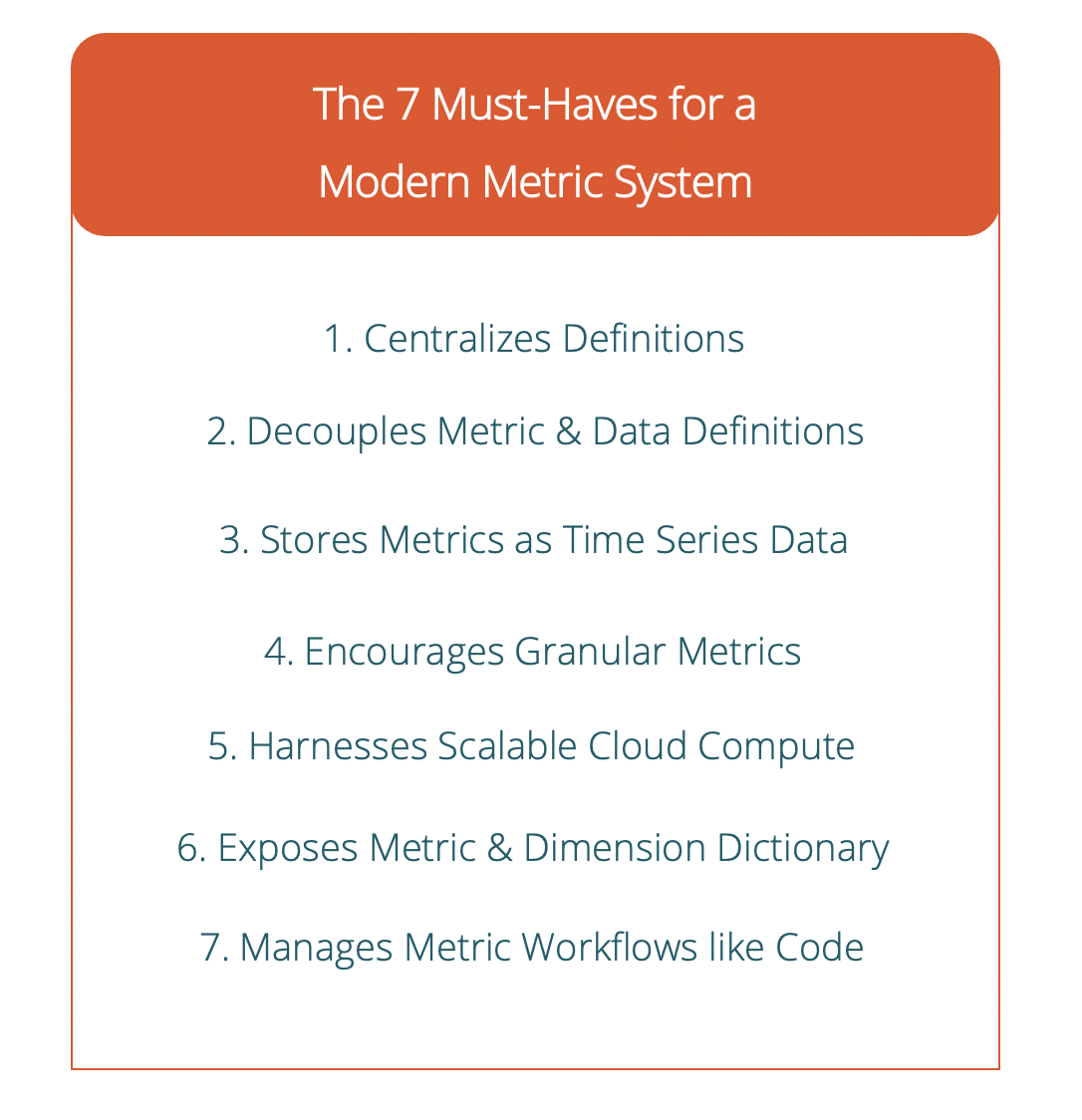

The modern metrics stack is a combination of existing analytics expertise and engineering processes with new workflows and tooling. It brings the same operational visibility and rigor that engineering orgs have adopted around revision, deployments and monitoring to bear on business metrics. Here are the 7 must-have traits of this stack.

Centralizes metric definitions

Metric definitions are centralized within the organization and visible to all. This is the first and most important step towards ensuring each team or line of business defines the key 100 business metrics the same way. Making work available and shareable means teams can build upon the work of other teams instead of reinventing the wheel.

Concretely, this means metric definitions live in files that are checked in to source control (such as Git), just like all other code in your company. They are 100% owned by your company, not by the BI tool. Now they can be referenced by anyone across the company who wants to develop the same metric for their team, or take a dependency on it for a related metric. This also unlocks all of software engineering’s best practices for metric formula and data definitions (more on this below).

Separates metric formulas from data definitions

In the world of BI, a metric is a succinct summarization of data to make it easily palatable to humans. Inherent to this are two concepts — the formula to be applied to summarize the data (metric formula definition) and the data to be summarized (metric data definition). In most BI tools, these concepts are conflated into one and exist as the combined “metric definition,” locked up inside the BI tool.

We think these should be split apart.

The complex SQL query that produces the rows needed by the metric (the data definition) should be defined separately from the metric formula (the SUM, COUNT or AVERAGE operation performed by the metric). This is a fundamental concept that allows us to centralize data production for a metric and manage metric data lineage in the data warehouse, very similar to how data transformation is handled by DBT.

Now a single SQL query can produce the data needed for the SUM, COUNT, AVERAGE and P99 variants of a metric in the data warehouse (for example: revenue, number of transactions, average revenue per transaction, and P99 transaction revenue). This consistent source of metric data is now available to all applications, from experimentation platforms to reporting tools to data science workflows.

And metric definition becomes really simple — we specify in config the operation to be performed (SUM, COUNT, AVERAGE or even custom formulas) and this operator is applied against all rows in the table or view in the data warehouse produced by the SQL query. Modern BI tools can read this config directly, and adapters can be written to program existing BI tools with metric definition via API.
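As a rough illustration of this split, here is a minimal Python sketch. The `MetricFormula` fields, the `OPERATORS` table and the sample rows are all hypothetical names and made-up data, not the config format of any particular BI tool. The rows stand in for the output of one shared SQL query, and each metric is just a small piece of config naming the operation to apply.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Callable, Dict, List, Sequence

# Hypothetical operator table: maps the operation named in config to a function.
OPERATORS: Dict[str, Callable[[Sequence[float]], float]] = {
    "SUM": sum,
    "COUNT": len,
    "AVERAGE": mean,
}


@dataclass(frozen=True)
class MetricFormula:
    """The formula half of a metric: which operation to apply to which column."""
    name: str
    source: str     # table or view produced by the shared SQL query
    column: str
    operation: str  # a key of OPERATORS


def evaluate(formula: MetricFormula, rows: List[dict]) -> float:
    """Apply the configured operation to every row of the source data."""
    values = [row[formula.column] for row in rows]
    return OPERATORS[formula.operation](values)


# Made-up rows standing in for the view the SQL query would produce.
rows = [
    {"transaction_id": 1, "amount": 20.0},
    {"transaction_id": 2, "amount": 30.0},
    {"transaction_id": 3, "amount": 70.0},
]

# Three metrics that share one data definition and differ only in formula.
revenue = MetricFormula("revenue", "transactions_view", "amount", "SUM")
num_transactions = MetricFormula("num_transactions", "transactions_view", "amount", "COUNT")
avg_revenue = MetricFormula("avg_revenue_per_transaction", "transactions_view", "amount", "AVERAGE")

for metric in (revenue, num_transactions, avg_revenue):
    print(metric.name, evaluate(metric, rows))
```

In a real stack the operation would run in the warehouse or the BI tool rather than in Python; the point of the sketch is only that the formula is a few lines of config once the data is defined elsewhere.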

The use of source control for metric data definition (i.e. SQL files that live in Git) allows us to iterate and version metric data definition just like code. The SQL files can have embedded functions and macros that can be evaluated with a scripting language like Jinja2 or Starlark prior to the SQL being generated. This allows for elimination of code repetition/duplication by refactoring common code into functions. DBT already enables this for data transformations — the same techniques can also be applied to metric data definitions.
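To make the refactoring idea concrete, here is a small sketch that uses Python's standard-library `string.Template` as a stand-in for a templating language like Jinja2. The table name, column names and the `completed_orders_filter` helper are hypothetical. The helper plays the role of a shared macro, so every metric data definition that needs "completed orders" reuses one definition of what that means.

```python
from string import Template


def completed_orders_filter(table_alias: str) -> str:
    """Shared 'macro': a single definition of what counts as a completed order."""
    return f"{table_alias}.status = 'completed' AND {table_alias}.is_test = FALSE"


# Hypothetical metric data definition; in practice this would live in a .sql file in Git.
TRANSACTIONS_SQL = Template(
    "SELECT o.order_id, o.order_date, o.amount\n"
    "FROM $orders_table AS o\n"
    "WHERE $completed_filter"
)


def render_transactions_sql(orders_table: str) -> str:
    """Expand the template into plain SQL before it is sent to the warehouse."""
    return TRANSACTIONS_SQL.substitute(
        orders_table=orders_table,
        completed_filter=completed_orders_filter("o"),
    )


print(render_transactions_sql("analytics.orders"))
```

If the business rule for a completed order changes, only the helper changes, and every query that uses it picks up the new rule on the next render.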

Requires all metrics be time series

To determine the impact of your tactical and strategic company initiatives, you must measure the changes to your metrics over time. This is possible only if all your metrics are treated as time series data, where time is a required dimension present on every single metric data point. The value of each metric is computed for the most granular time interval for which the metric value is desired (be it a day, a week, a month, etc.).

Doing this allows us to look back at past performance and correlate the impact of organizational activity on each metric. We can observe the periodic nature of the business and customer interactions, such as weekdays vs weekends. We can also make forecasts about future business growth by building predictive models that encode historical performance.
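A minimal sketch of what "time is a required dimension" can look like in practice: every row carries a date, and the metric is computed per time bucket. The row shape, the `bucket_start` helper and the sample data are assumptions made for illustration.

```python
from collections import defaultdict
from datetime import date, timedelta


def bucket_start(day: date, grain: str) -> date:
    """Return the first day of the bucket (day, week or month) that contains `day`."""
    if grain == "day":
        return day
    if grain == "week":
        return day - timedelta(days=day.weekday())  # Monday of that week
    if grain == "month":
        return day.replace(day=1)
    raise ValueError(f"unsupported grain: {grain}")


def time_series(rows, grain):
    """Sum `amount` per time bucket, returned in chronological order."""
    totals = defaultdict(float)
    for row in rows:
        totals[bucket_start(row["date"], grain)] += row["amount"]
    return dict(sorted(totals.items()))


# Made-up daily rows; the date is present on every data point.
rows = [
    {"date": date(2024, 1, 1), "amount": 10.0},
    {"date": date(2024, 1, 2), "amount": 5.0},
    {"date": date(2024, 1, 8), "amount": 7.0},
    {"date": date(2024, 2, 1), "amount": 3.0},
]

print(time_series(rows, "week"))
print(time_series(rows, "month"))
```

Because the data is stored at the finest grain, coarser views such as weekly or monthly totals can be derived from it, while the reverse is not possible.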

Expresses metric data at very granular levels

Separating metric definitions from metric data definitions unlocks a new superpower — the ability to generate denormalized data rows for metrics at the most detailed and granular level. These denormalized data rows are then consumed by the BI tool according to the metric definition. Each data row includes all the rich dimensional data from entities participating in the transaction — this allows the tool to drill down into as much detail as desired by the operator with no loss of information.

In the example of a “Daily Transactions” metric, this means we can produce a data row for every single transaction for every single day. Each row contains dimensions associated with the participating user, product SKU, shipping method, payment instrument, and so on. This allows us to drill into Daily Transactions by any or all of these dimensions, dramatically improving visibility into the business. Because this data is a time series, we can compare performance of “Daily Transactions” over time across whatever combination of dimensions we care about.
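The drill-down described here can be sketched as a count grouped by the date plus any chosen combination of dimensions. The dimension names (`sku`, `shipping`, `payment`) and the rows are hypothetical; the only assumption that matters is that each transaction row keeps all of its dimensions.

```python
from collections import Counter


def daily_transactions(rows, dimensions=()):
    """Count transactions per day, optionally split by any combination of dimensions."""
    counts = Counter()
    for row in rows:
        key = (row["date"],) + tuple(row[d] for d in dimensions)
        counts[key] += 1
    return dict(counts)


# One made-up denormalized row per transaction.
rows = [
    {"date": "2024-03-01", "sku": "A", "shipping": "ground", "payment": "card"},
    {"date": "2024-03-01", "sku": "A", "shipping": "air", "payment": "card"},
    {"date": "2024-03-01", "sku": "B", "shipping": "ground", "payment": "wallet"},
    {"date": "2024-03-02", "sku": "A", "shipping": "ground", "payment": "card"},
]

print(daily_transactions(rows))                       # totals per day
print(daily_transactions(rows, ("sku",)))             # per day and SKU
print(daily_transactions(rows, ("sku", "shipping")))  # per day, SKU and shipping method
```

The same rows answer all three questions; no separate pre-aggregated table is needed for each combination of dimensions.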

Harnesses the power and scalability of cloud data warehouses

Cloud-based data warehouses make the modern metrics stack possible. They offer near-infinite storage and compute capacity with the flexibility of near-instantaneous scale-up/scale-down. This makes it possible to produce a large amount of metric data rows in the data warehouse for consumption by analytics and data science in the most granular form, while also making it feasible to keep all metric history in cold storage at the desired level of granularity.

Exposes metric and dimension dictionaries

A metrics dictionary encodes standardized nomenclature for metrics across the organization. The encoded names are both human and machine-readable. Having a metrics dictionary means teams now have a central place to look up the definition and formula for a metric like “Revenue” to determine how it should be computed. To take that further, any metric definition in the codebase that is tagged with the “revenue” label can now be discovered and inspected for conformance with the centralized definition by automated tools.

Analogously, a dimension dictionary expresses what dimensions are available on each entity present in the data warehouse. This results in easy discoverability of available dimensions to every metric author at the company and ensures consistency in dimension definition and usage across metrics.
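As a sketch of how automated tools might use these dictionaries, the snippet below checks metric definitions tagged with a label against the canonical entry for that label, and checks that every dimension a metric uses exists in the dimension dictionary. The dictionary layout, field names and sample definitions are all hypothetical.

```python
# Hypothetical metrics dictionary: the canonical formula for each standard name.
METRICS_DICTIONARY = {
    "revenue": {"operation": "SUM", "column": "amount"},
}

# Hypothetical dimension dictionary: dimensions available on each entity.
DIMENSION_DICTIONARY = {
    "user": {"country", "plan"},
    "product": {"sku", "category"},
}


def nonconforming_metrics(definitions):
    """Names of metrics whose formula differs from the canonical entry for a label they carry."""
    problems = []
    for definition in definitions:
        for label in definition["labels"]:
            canonical = METRICS_DICTIONARY.get(label)
            if canonical is None:
                continue  # label is not a standardized metric name
            actual = (definition["operation"], definition["column"])
            if actual != (canonical["operation"], canonical["column"]):
                problems.append(definition["name"])
    return problems


def unknown_dimensions(definition):
    """Dimensions used by a metric that no entity in the dimension dictionary provides."""
    known = set().union(*DIMENSION_DICTIONARY.values())
    return sorted(set(definition["dimensions"]) - known)


definitions = [
    {"name": "sales_revenue", "labels": ["revenue"], "operation": "SUM",
     "column": "amount", "dimensions": ["country", "sku"]},
    {"name": "marketing_revenue", "labels": ["revenue"], "operation": "AVERAGE",
     "column": "amount", "dimensions": ["country", "campaign"]},
]

print(nonconforming_metrics(definitions))
print(unknown_dimensions(definitions[1]))
```

A check like this could run on every commit, which turns the dictionary from documentation into something that is actually enforced.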

Manages metrics like code

Engineering organizations are methodical in their approach to deploying code to production. Unit tests and integration tests are run on each code commit, usually as part of a CI/CD pipeline, and the teams work hard to keep the code in their “main” git branch at a “production-ready” level of code quality.

In the modern metrics stack, the same rigor is applied to metric formula and data definitions. Changes to these definitions are peer-reviewed using a code-review workflow. This allows logic and data lineage bugs to be caught before they make their way to production and cause a fire drill on Monday morning. Code linting tools can be configured to find bugs in your metric definitions. Unintentionally duplicated and divergent metrics can be easily discovered.

Metric formulas and data definitions are tested just like code. Tests measure whether the data being supplied to metrics violates any of the expectations on the data, and whether the formula for the metric produces the expected metric value for a known set of time intervals and a known set of dimensions.
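The two kinds of tests can be sketched as follows, using plain Python assertions. The expectations (a date on every row, no negative amounts), the `revenue` formula and the fixture rows are assumptions chosen for illustration; a real project would pick expectations that match its own data.

```python
from datetime import date

# Made-up fixture rows with known values.
rows = [
    {"date": date(2024, 3, 1), "region": "EU", "amount": 40.0},
    {"date": date(2024, 3, 1), "region": "US", "amount": 60.0},
    {"date": date(2024, 3, 2), "region": "EU", "amount": 25.0},
]


def expectation_violations(rows):
    """Data test: describe every row that breaks an expectation on the data."""
    violations = []
    for i, row in enumerate(rows):
        if row.get("date") is None:
            violations.append(f"row {i}: missing date")
        if row.get("amount") is None or row["amount"] < 0:
            violations.append(f"row {i}: amount missing or negative")
    return violations


def revenue(rows, day, region=None):
    """Formula under test: sum of amount for one day, optionally for one region."""
    return sum(
        r["amount"]
        for r in rows
        if r["date"] == day and (region is None or r["region"] == region)
    )


def test_data_meets_expectations():
    assert expectation_violations(rows) == []


def test_revenue_for_known_interval_and_dimension():
    assert revenue(rows, date(2024, 3, 1)) == 100.0
    assert revenue(rows, date(2024, 3, 1), region="EU") == 40.0


# Run the tests directly; a test runner in CI would normally discover them instead.
test_data_meets_expectations()
test_revenue_for_known_interval_and_dimension()
print("all metric tests passed")
```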

Changes to metrics are intentionally “deployed” to production — in this case made available to BI tools. Deployments are deterministic and reproducible, and have no unintended side-effects. Metrics are versioned, and previous versions are not lost when a new version is rolled out. Changes to a metric can be rolled back if they don’t meet expectations.
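One way to picture versioned deployments with rollback is a registry that never overwrites a definition. This is a toy in-memory sketch; the `MetricRegistry` class and its methods are hypothetical and do not describe any specific tool.

```python
class MetricRegistry:
    """Keeps every deployed version of each metric and tracks which one is active."""

    def __init__(self):
        self._versions = {}  # metric name -> list of definitions, oldest first
        self._active = {}    # metric name -> index of the active version

    def deploy(self, name, definition):
        """Append a new version, make it active, and return its 1-based version number."""
        versions = self._versions.setdefault(name, [])
        versions.append(definition)
        self._active[name] = len(versions) - 1
        return len(versions)

    def rollback(self, name):
        """Re-activate the previous version; the newer version stays in the history."""
        if self._active[name] == 0:
            raise ValueError(f"{name} has no earlier version to roll back to")
        self._active[name] -= 1
        return self._active[name] + 1

    def active(self, name):
        """Return the definition currently exposed to BI tools."""
        return self._versions[name][self._active[name]]

    def history(self, name):
        """Return a copy of all versions ever deployed for this metric."""
        return list(self._versions[name])


registry = MetricRegistry()
registry.deploy("revenue", {"operation": "SUM", "column": "amount"})
registry.deploy("revenue", {"operation": "SUM", "column": "net_amount"})
registry.rollback("revenue")
print(registry.active("revenue"))
print(len(registry.history("revenue")))
```

In practice Git history and tagged releases could play the role of this registry; the sketch only shows the behavior we want: new versions are additive and a rollback is a change of pointer, not a deletion.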

Tools simplify the process of deploying metrics to production, and automate away the complexity of keeping related metrics consistent. Deployments may be triggered manually or automatically as part of a continuous deployment workflow. The tools offer a clear picture of what is and will be deployed with each change.

The path ahead

Centralizing metric and data definition is the next step in the data democratization journey. It empowers the growing cadre of data-literate business professionals to collaborate and unblock themselves when they need access to data and insights, while maintaining the visibility and operational rigor around how data is summarized and used that data engineering and analytics teams increasingly demand. Putting the framework for collaboration in place before we end up with a hundred different copies of a metric and its data is key. To recap:

- Bringing metric formula and data definition into the organization’s code repository allows us to centralize metric definition for every team to take advantage of.

- Separation of metric formulas from data definitions means we can leverage data warehouses and tools like DBT to build shared data sources for reporting, analytics and data science.

- Adopting modern engineering practices and tools allows us to enforce governance on metric and data lineage, while paradoxically making the process of rolling out new and updated metrics easier than before.
